DOI:

https://doi.org/10.64539/msts.v2i1.2026.415

Keywords:

Learning outcomes, Assessment metrics, Pedagogy, Generative Artificial Intelligence (GAI), Cognitive engagement, Academic integrity

Abstract

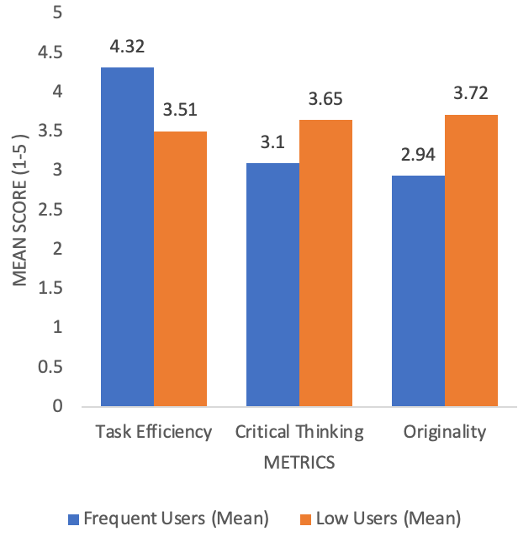

The rapid emergence of Generative Artificial Intelligence (GAI) has transformed higher education, influencing pedagogy, assessment, and student learning experiences. Despite its widespread adoption, a significant research gap persists regarding the empirical measurement of its impact on specific learning outcomes: existing assessment frameworks often fail to distinguish between machine-generated efficiency and genuine cognitive development. This study addresses this gap by developing the Robust Assessment Metrics Framework (RAMF), evaluated through a mixed-methods approach involving students and faculty (N = 295) at McPherson University. Quantitative findings reveal a significant "Efficiency-Cognition Trade-off": frequent GAI usage significantly enhances task efficiency (p < 0.001) but correlates with a statistically significant decline in critical thinking (p < 0.01) and self-reported originality (p < 0.01). Regression analysis further shows that AI literacy and institutional policy clarity are stronger predictors of academic confidence than usage frequency. This suggests a psychological "confidence paradox" in which students feel more capable despite lower cognitive engagement. Qualitatively, thematic analysis highlights a shift toward "shortcut learning" that necessitates a move from product-oriented to process-oriented evaluation. The RAMF introduces expert-validated protocols, such as the "30/70 Synthesis Rule" and "Process Logs", to safeguard academic rigor. The framework is proposed for institutional trial, giving institutional leaders a basis for shifting to process-oriented assessment in the AI era. By focusing on the interplay between human agency and algorithmic assistance, this research offers broader implications for pedagogical redesign in an AI-saturated academic environment.
